In Correspondence #50

More from Hilma and tips for the LLM connoisseur

Dear friends,

Welcome to the latest In Correspondence. I’m writing from Gothenburg, where yesterday I completed the ÖTILLÖ Gothenburg Sprint swimrun (3.9km swim in 17 sections, 18.5km run in 18 sections) in 5h11m 🎉

On Wednesday I head to the UK for a week. In London on Tue 5th Aug? Come hang out at Technofeudalism, Network Nations and the Future of Dandelion

Scroll down for some tips for the LLM connoisseur.

What I’ve been up to

- and I published Chapter 1: The Techno-Skeptics and Chapter 2, part 1: The Techno-Optimists: e/acc of Technological Metamodernism

I spent a very full month at Emerge Lakefront, including celebrating midsummer and participating in a week-long residency on Network Nations

Laura and I holidayed for a week in Skåne, where highlights included the coastal Stenshuvud National Park, Ales Stenar (the ‘Swedish Stonehenge’), cute Österlen villages lined with wild hollyhocks and Malmö’s lively Folkets Park

Plans for the future

The start of the Planet.Health residency at Emerge Lakefront, co-organised by brilliant friend Dr Rita Issa

A week catching up with friends in Devon

Another swimrun on Åland, 9 Aug

A Swedish B2 course at Folkuniversitet, 18-29 Aug

Spark Changemakers on Ekskäret, 9-13 Sep

Deep Joy: A Samadhi Retreat with Yahel Avigur and Sari Markkanen in Finland, 15-22 Sep

Some tips for the LLM connoisseur

EQ-Bench Longform Creative Writing is one of the AI benchmarks I find most meaningful. Sonnet 3.7 ranks above Sonnet 4, and its slop score is bettered only by Opus 4. That fits my experience: Sonnet 3.7 (thinking) is still my favourite AI writing partner once you adjust for cost, since I can’t justify the much higher price of Opus 4 for a marginal benefit.

The Chinese open-source labs have been absolutely cooking though, with Kimi K2, Qwen3 235B A22B Thinking 2507 and Qwen3 Coder looking incredibly strong.

To see which models have product-market fit, check OpenRouter’s weekly chart. To see what’s hot, check its trending chart.

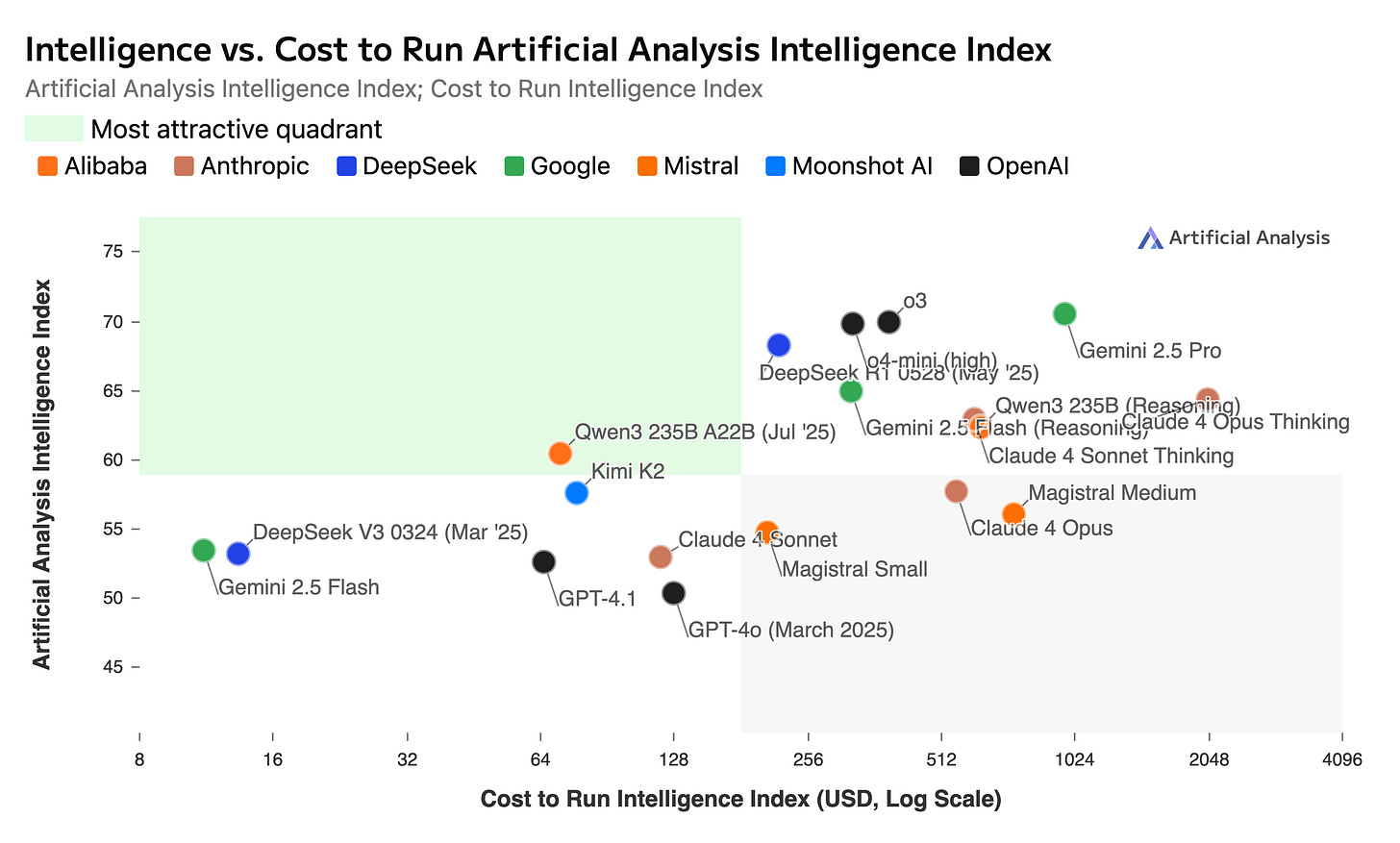

Don’t compare the cost of reasoning and non-reasoning models using per-token pricing, as (by design) reasoning models output way more tokens. A better indicator of cost is the Cost to Run Artificial Analysis Intelligence Index (note the log scale on the x-axis).

Dandelion’s current workhorse is Gemini 2.5 Flash, in standard and thinking variants.

Note: Qwen3 235B (Reasoning) shown here is the original version, not the just-released 2507 version. I expect the 2507 version to be on the intelligence-cost frontier.
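To make the per-token trap concrete, here is a minimal sketch of the arithmetic in Python. The numbers are made up for illustration and are not real prices or token counts for any model. It also counts only output tokens and ignores input pricing, which is a simplifying assumption. The point is simply that cost per task is price per token multiplied by tokens used, so a cheaper-per-token model can still cost more per task if it thinks at length.

```python
from dataclasses import dataclass


@dataclass
class Model:
    """A hypothetical model; all figures below are illustrative, not real pricing."""
    name: str
    usd_per_million_output_tokens: float
    output_tokens_per_task: int  # reasoning models emit many extra "thinking" tokens

    def cost_per_task(self) -> float:
        # Simplification: output tokens only, input tokens ignored.
        return self.usd_per_million_output_tokens * self.output_tokens_per_task / 1_000_000


# Hypothetical: half the per-token price, but ten times the tokens per task.
cheap_reasoner = Model("hypothetical reasoning model", 2.00, 10_000)
pricier_plain = Model("hypothetical non-reasoning model", 4.00, 1_000)

for m in (cheap_reasoner, pricier_plain):
    print(f"{m.name}: ${m.cost_per_task():.4f} per task")
```

With these invented figures, the reasoning model is half the per-token price yet five times the cost per task ($0.02 vs $0.004), which is why a per-task measure like the Cost to Run chart is the fairer comparison.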

Offerings from friends

Dandelion is closing in on £5m in tickets sold. Why not list your next event there?

Breadchain friends will be at the Crypto Commons Gathering in Austria at the Commons Hub, 24-29 Aug 🍞

Join jae spencer-keyse, Jan Baeriswyl and Dominique Antonina for the fourth Futurecraft Residency, this time taking place at The Garden near Porto, Portugal, 2-8 Sep 🌿

Life Itself’s Second Renaissance proto-festival, France, 5-7 Sep, and a 3-month Practicum, Sep-Dec

Beatrix Bliss is co-facilitating the Mountain Medicine Meditation psilocybin retreat in Portugal, 12-19 Nov, with bursaries available on request 🍄🟫

Until next time, mettā,

Stephen

p.s. I love to hear from readers. Why not hit reply and let me know what you found useful or interesting?

Hey Stephen, I have been editing and restructuring a longer work of mine (book length). Do you have any advice on which LLM would be most suitable for this? I really don't know what's out there.